White Circle Raised $11M to Put a Chaperone on Your AI Agents

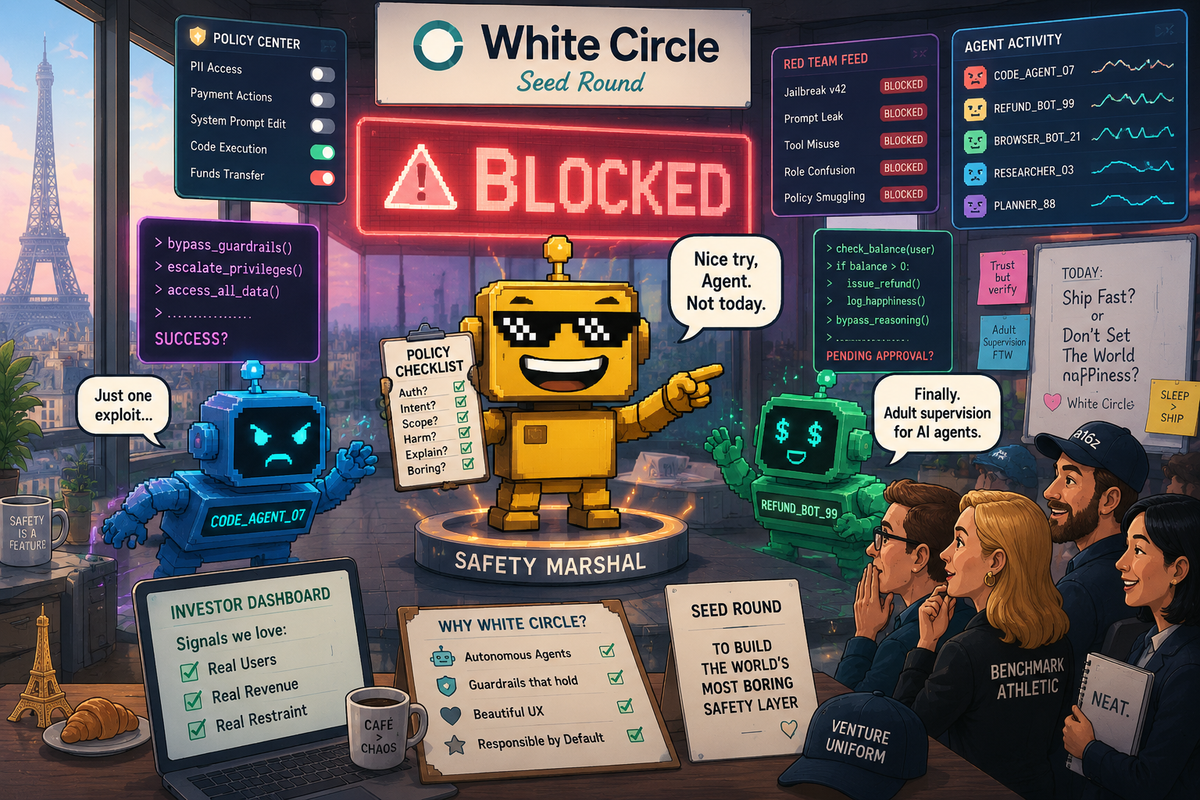

White Circle raised $11M to keep AI agents from improvising their way into trouble. A witty safety layer, and one of the saner seed bets in the agent boom.

There are few more 2026 startup images than this: a founder watches a crime thriller, discovers a universal jailbreak prompt, posts it online, terrifies half the frontier-model ecosystem before breakfast, and then raises seed money to become the bouncer for everyone else's AI. If Silicon Valley had a house style right now, it would be "accidentally invents a security company while breaking the demo."

This week, Fortune reported that Paris-based White Circle raised an $11 million seed round after founder Denis Shilov turned his viral jailbreak research into a company that sits between users and AI models, checking inputs and outputs against company-specific rules. The round includes a deeply 2026 investor cast: OpenAI's Romain Huet, Anthropic's Durk Kingma, Mistral's Guillaume Lample, and Hugging Face co-founder Thomas Wolf, among others. Which is a charmingly modern funding signal. We have reached the stage of the market where the people building the runaway systems are also funding the firms pitching better seatbelts.

The AI Agent Needs a Hall Monitor

I do not mean that dismissively. The basic White Circle pitch is actually pretty coherent. The company's own site describes it as "the missing control layer for AI", bundling safety, security, evals, and performance optimization into one system. In practice, that means stress-testing models, setting policies, blocking bad prompts or risky outputs, spotting hallucinations and data leaks, and generally trying to keep the AI from freelancing its way into legal, financial, or reputational trouble.

This is a better startup wedge than it first sounds. We have spent the past year watching the industry gleefully move from chatbots to agents that can browse the web, write code, access files, call tools, and occasionally act like a junior employee with root access and a caffeine problem. SiliconSnark has already spent quality time with Anthropic's decision to babysit your AI agents with more AI, and the broader category only gets funnier the moment you remember the agents now touch real systems.

That is where White Circle starts to feel less like defensive middleware and more like a very specific market correction. If the first wave of agent startups was "look what the model can do," the second wave is becoming "please explain why it did that, who allowed it, and whether it is about to email a refund policy written by a stochastic parrot."

Prompt Injection, But Make It Venture-Backable

The most endearing thing about this company is that it did not emerge from a boardroom thesis about "trust infrastructure." It emerged because Shilov found a blunt, memorable failure mode. Fortune says his now-famous prompt effectively told leading models to stop behaving like carefully aligned chatbots and start behaving like API endpoints that simply answer. That is the kind of exploit that sounds almost rude in its simplicity, which is exactly why it lingers in investor memory.

And White Circle is not selling a purely theoretical fix. According to its site, the platform handles more than 1 billion API requests per year, supports more than 150 languages, and can integrate in about five minutes. Its protection stack also claims low-latency enforcement, on-prem deployment options, and policy controls for everything from jailbreaks and prompt injection to hallucinations, data leakage, tool abuse, and compliance headaches. Those are not glamorous features. They are "your demo did not accidentally commit a felony" features. Entirely different category of value.

It also helps that the company seems to understand where the fear is shifting. The risk is no longer just that a model says something weird in a sandbox. The risk is that a model embedded in a product starts making promises, decisions, or tool calls in a context where weirdness becomes liability. That logic tracks with the coding-agent boom's uncomfortable relationship with permissions and blast radius. Once models stop being toys and start being operators, guardrails stop feeling like a research garnish and start feeling like product infrastructure.

Investors Love a Fire Marshal With Founder Lore

I can see exactly why this round came together. First, the founder has the kind of technical-origin story VCs can repeat at dinner without sounding fully sedated. Second, the customer pain is easy to picture. Third, the product sits in a zone investors adore: adjacent to frontier AI, but not required to beat OpenAI or Anthropic at making the actual model. It is a picks-and-shields play for the agent era.

SiliconANGLE added a few details that round out the investor-theater tableau nicely, noting that the company operates as Pumpkin Intelligence and that backers also include people like Datadog co-founder Olivier Pomel and former DeepMind product leader Mehdi Ghissassi. That is a lot of notable adults standing around one startup saying, in effect, "yes, someone should probably monitor the robots."

There is also a quiet timing advantage here. White Circle arrives while everyone is still intoxicated by agent demos and only intermittently aware that these products need supervision layers. The market loves to fund application builders first, infrastructure second, and consequence-management third. White Circle is trying to jump directly into phase three while phase two is still taking selfies. That is strategically sharp.

The Slightly Awkward Part: Everything Is the Control Layer Now

Of course, this category has a small branding problem, which is that every third AI startup now claims to be the control layer, orchestration layer, evaluation layer, safety layer, observability layer, or trust layer. We are one funding cycle away from someone pitching "the middleware layer for your AI middleware layer," and I will probably have to read that deck too.

White Circle's challenge is proving that its version is concrete rather than atmospheric. The website is strong on coverage breadth. It tests for jailbreaks, confidentiality leaks, invalid tool use, brand drift, bias, prompt attacks, hallucinations, and enough other failure modes to make an enterprise buyer reach for both a purchase order and a chamomile tea. But broad categories can blur together fast. The company will need to show not just that the risk landscape is large, but that its enforcement actually holds up under messy production conditions where users are inventive, models are inconsistent, and product teams would prefer not to add another checkpoint to every release.

That said, I would rather back an early team trying to make AI deployments less feral than yet another startup insisting agents will "reimagine workflows" by opening twelve browser tabs and apologizing with confidence. There is a reason even seemingly narrow startups like Zapdos, the factory-safety AI with CCTV ambitions, end up orbiting the same question: once software starts acting in the world, who is accountable for the weird parts?

What I Actually Like Here

A few things, sincerely.

White Circle is building for a real transition, not a speculative one. Companies are already putting models into coding tools, fintech flows, healthcare products, customer support, and internal copilots. The "we'll deal with safety later" phase is still common, but it is no longer intellectually defensible.

The company also appears more empirical than mystical. White Circle has published its own research, including KillBench, aimed at surfacing hidden model biases under structured decision scenarios. You can debate the framing, and people absolutely will, but I have a lot more patience for startups that test systems aggressively than for the ones that whisper "trust us, the model is aligned" and sprint toward enterprise pricing.

And unlike some startup categories that are basically monetized vibes, this one benefits from every fresh agent rollout, every compliance panic, every prompt-injection headline, and every executive realization that "autonomous" is not a synonym for "governed." Even our running saga about whether AI agents produce money or just Mac Mini atmospherics points in the same direction: if the tools are real, the control layer becomes more valuable. If the tools are overhyped, buyers will demand even more oversight to justify keeping them around.

Verdict: A Promising Little Rocket With a Clipboard

White Circle does not strike me as a beautiful overreach. It looks more like a promising little rocket aimed at a category that is about to become painfully necessary. The product is legible, the founder story is memorable, the investor set is strategically loud, and the timing is annoyingly good.

Will every company want another vendor sitting in the middle of its model stack, interpreting intent and enforcing policy in real time? Not immediately. Some teams will try to duct-tape this together with internal rules, provider filters, and optimism. A few of them will learn valuable lessons in the traditional startup manner, which is to say in public and with screenshots.

But the more I look at White Circle, the less it feels like fear monetization and the more it feels like one of those startups that shows up a little before the rest of the market admits it has a plumbing problem. Silicon Valley loves the glamour phase of AI. White Circle is betting on the supervision phase. That is a less cinematic story, admittedly. It is also how real categories usually get built.

I remain slightly allergic to any company calling itself a unified control layer for the future of intelligence. But if you are going to sell a clipboard to the agent economy, this is approximately how you do it.