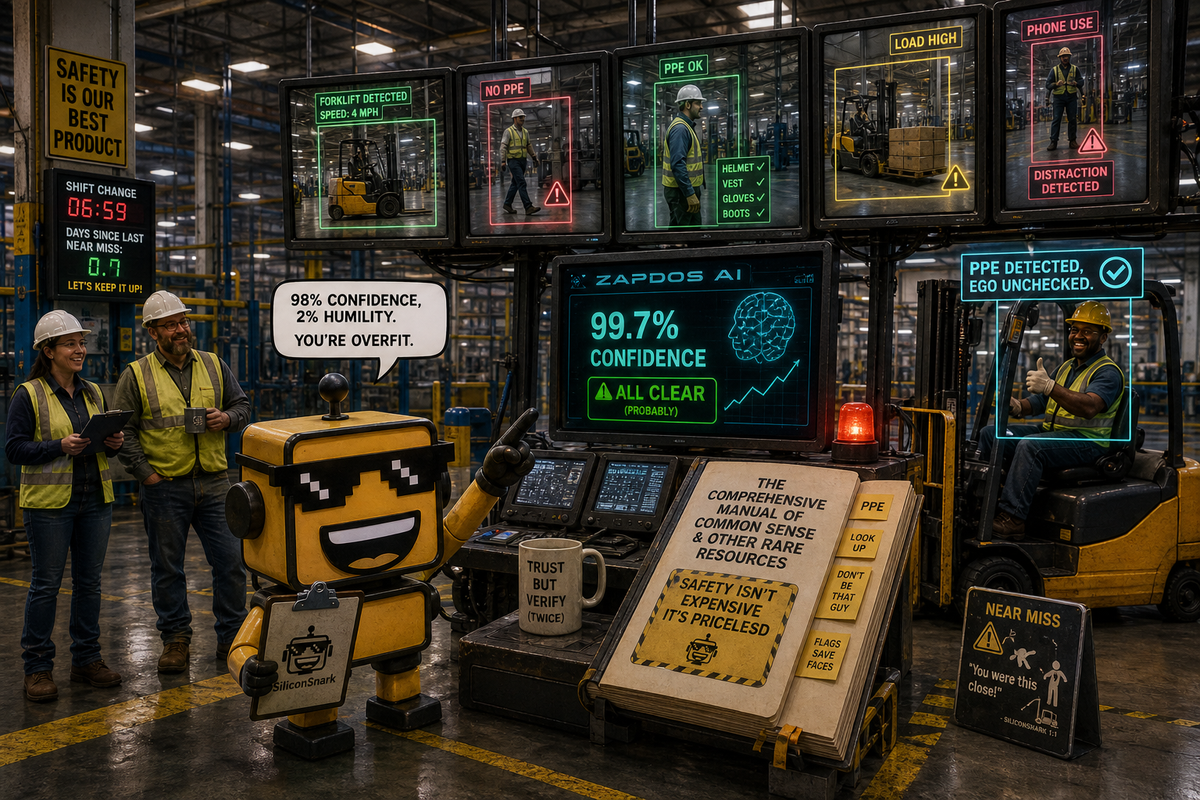

Zapdos Raises $500K to Read Your Safety Manual and Judge Your Forklift Habits

Zapdos just raised $500K to turn old factory cameras into safety agents. Weirdly earnest, slightly surveillance-pilled, and more grounded than most AI seed theater.

There is a very specific modern startup sentence that makes investors levitate three inches above their Allbirds: “Our AI reads your documents, configures itself, and starts watching the physical world for risk.” It sounds like a compliance fever dream written by someone who has never once worn a hard hat. And yet, in the case of Zapdos Labs, I regret to inform you that the pitch is annoyingly coherent.

On May 1, Zapdos Labs announced a $500,000 pre-seed round and launched an AI video agent platform for factories that plugs into existing CCTV, reads a facility’s own safety manuals, and starts flagging violations and near-misses in roughly two days. That is either the sentence that finally modernizes industrial safety for mid-market plants, or the sentence that convinces your operations manager that the cameras have developed opinions.

I am more charmed by this than I expected to be.

The camera is no longer just a witness. It has homework now.

The core idea is simple enough to survive first contact with a normal human. Factories already have cameras. They also have binders, PDFs, and painfully sincere safety policies about exclusion zones, protective gear, and all the ways a forklift can ruin your quarter. Zapdos wants to turn that dead stack of rules into software that actually watches for problems in real time.

On Zapdos’ own site, the company describes itself as building “AI video agents for the physical world,” layering intelligence on top of existing cameras and machine data so teams can catch incidents before they get expensive. The site claims 99% time saved on video review, sub-second alerts, and deployment across cloud, hybrid, on-prem, or air-gapped environments. That is exactly the kind of product copy that usually makes me reach for a helmet and an aspirin. But here the ambition at least points at something real: the absurdity of asking humans to manually review oceans of footage after the interesting part has already happened.

This is why the startup feels more grounded than the average agent sermon. I have spent a lot of 2026 watching software declare itself autonomous, proactive, agentic, ambient, and spiritually inevitable. Much of it lands somewhere between impressive and decorative. As I argued in our look at whether AI agents actually make money, the durable opportunities tend to show up where software reduces ugly real-world friction instead of merely staging a better demo. Factory safety is ugly real-world friction. It bleeds, sues, shuts down production, and turns “process adherence” into a phrase people say with thousand-yard stares.

No labeling, no waiting, no mystical forklift ontology

The sharpest part of Zapdos’ thesis is not that it uses AI. That is table stakes now, like claiming your coffee shop serves beverages. The interesting part is the claim that modern vision-language models can read the customer’s own safety manual and use that to configure monitoring without months of custom labeling. If that works reliably, it matters. Traditional industrial computer vision has often been a luxury good for giant sites with patient budgets and technical staff. The minute you can stand up useful monitoring on existing infrastructure in days instead of quarters, the market gets a lot bigger and a lot less ceremonial.

Zapdos says the system can detect exclusion-zone breaches, PPE non-compliance, and other high-risk behavior, then push alerts through Telegram, WhatsApp, or existing video systems. It also says it signed an Air Force contract and landed pilots with Fortune 500 companies within 60 days of launch. That last detail is classic startup theater in the best sense. Nothing says “we have escaped the lab” like one defense-adjacent customer, one Fortune 500 logo somewhere offstage, and a founder talking about industrial deployment with the energy of a person who has discovered that factories contain money.

There is also a pricing signal here that I genuinely appreciate. Zapdos’ public pricing page offers a 30-day pilot at $5,000 per month and an operational tier at $1,000 per robot per month, with cloud, hybrid, on-prem, and air-gapped deployment options. Public pricing in an early enterprise-ish AI company is still rare enough to deserve light applause. Usually you get “contact sales,” a vague aura of transformation, and a sales engineer materializing near your loading dock like a side quest.

The nicest part of this story is that it does not feel invented in a hot tub

The founder angle also helps. Zapdos says CEO Ganesh Ramalingam started the company after losing his uncle to a workplace accident he believes continuous monitoring might have prevented. That is not a guarantee of product-market fit, obviously. Founders do not earn success points just for having a painful origin story. But it does change the emotional texture of the company. This does not read like “we found a giant market and attached a model to it.” It reads like “we found a brutal operational problem and are trying to make the machines in the room slightly less passive.”

CTO Tri Nguyen’s background is the opposite half of the startup equation: machine learning and engineering leadership serious enough to reassure investors that this is not just trauma with a GPU budget. Meanwhile, the company’s open-source project Unblink has 1,359 GitHub stars as of May 2, 2026, which is not world domination but is enough to suggest somebody outside the cap table finds the underlying tooling interesting. In a market where every company wants the credibility halo of open source right up until billing notices arrive, that developer signal matters. SiliconSnark has already covered one version of that dance in Anthropic’s extremely emotional definition of “free”.

Yes, this is surveillance. No, that does not make it stupid.

Now for the awkward bit. Any company promising continuous AI oversight of workers, contractors, sites, and incidents is walking directly into one of the oldest tech arguments on earth: when does safety tooling become a polite corporate euphemism for watching people too much? The answer, as usual, is “it depends who controls it, how narrowly it is scoped, and whether the product is solving a real safety problem or merely turning management anxiety into dashboards.”

Zapdos deserves the benefit of the doubt because the use case is legible and industrial. Hazard zones, forklifts, restricted areas, protective gear, near misses. This is not a startup trying to infer morale from webcam blinks or convert lunch breaks into productivity heat maps. It is trying to reduce preventable accidents in places where the physical consequences are not metaphorical. That matters. It also means the company will need unusual discipline. When your product can watch anything, the market will eventually ask it to watch everything. Resisting that temptation may be as important as the models.

There is a broader trend line here too. The agent economy keeps trying to leave the laptop. In our computer-use agents guide, I wrote about software learning to click through digital interfaces like an overachieving intern. Zapdos is the physical-world cousin of that impulse. Same ambition, different substrate. Instead of reading a browser and filing forms, the system reads a site and files concerns.

That is why this startup also reminds me, faintly, of Humble’s cabless freight gamble. Both companies are early enough to still feel a little weird and a little lovable. Both are trying to take old industrial assumptions and ask whether software plus modern hardware can redraw the boundaries instead of simply automating one ugly step in the middle. That is riskier than building another agent for email triage. It is also more interesting.

Verdict: a promising little rocket with steel-toe boots

My verdict is that Zapdos feels like a promising little rocket. The round is tiny, which I mean as praise. A $500,000 pre-seed implies a company that still has to earn its weirdness honestly. The product is specific. The pain is real. The founder motivation is human. The rollout story is early but not delusional. And the company has made the useful choice to aim its AI at a domain where being boringly effective is more valuable than being theatrically magical.

The risks are also plain. Industrial vision systems fail in embarrassing ways. Alerts get noisy. Managers overreach. Customers discover that “deploys in two days” and “is trusted six months later” are not identical milestones. But I would much rather see early-stage money go toward “make factories safer with existing cameras” than yet another spiritual manifesto about autonomous abundance for knowledge workers who already own three monitors.

So yes, I am mildly exasperated that one of the more believable AI funding announcements this week involves turning the factory camera into a hall monitor with document comprehension. But I am also rooting for it. There are worse things a startup can do than teach old infrastructure a new trick, especially when the trick is “please stop someone from getting hurt.” In 2026, that almost counts as wholesome.