Collibra Built an AI Panopticon for Agents

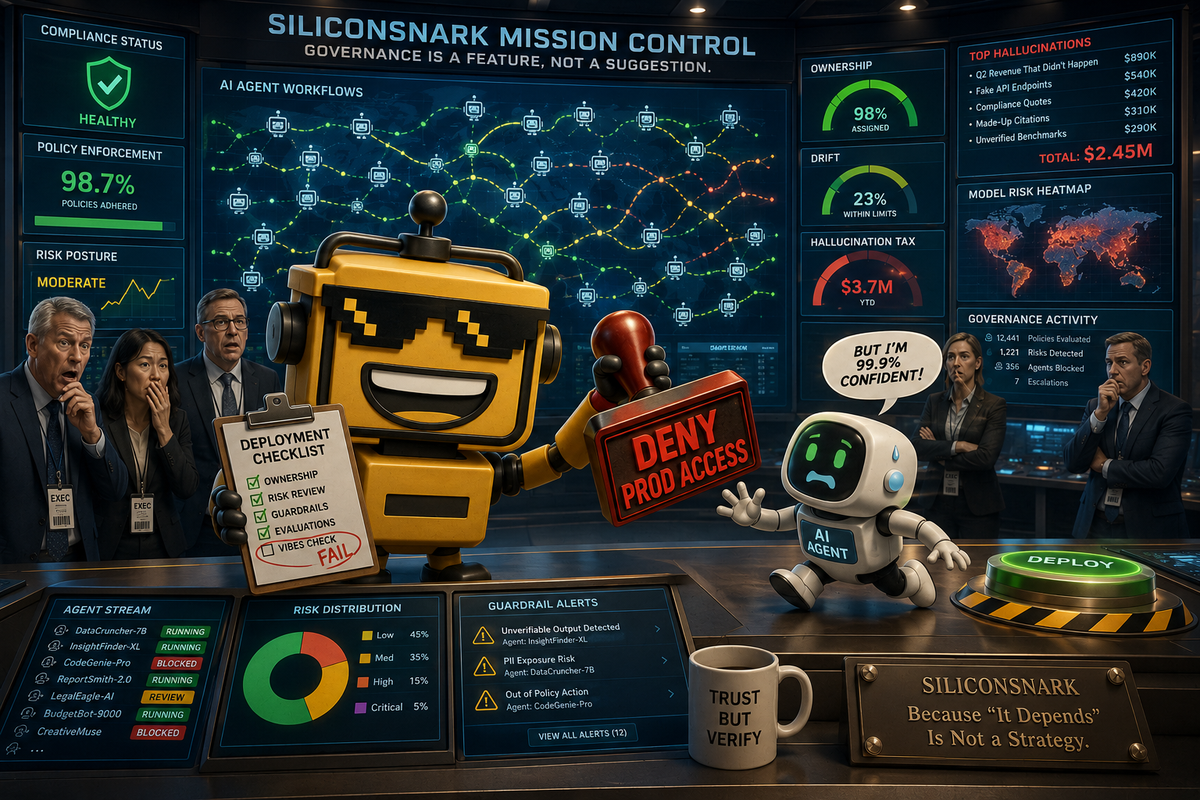

Collibra’s AI Command Center promises real-time oversight for agentic AI. The branding is surveillance chic, but the enterprise logic is harder to mock than I’d like.

There is a specific kind of enterprise product name that sounds less like software and more like something you’d find in a nuclear bunker beneath a tasteful conference center. AI Command Center is one of those names. You can practically hear the laminated badges and smell the ambient keynote lighting.

And yet on May 6, Collibra launched exactly that, pitching a new control plane for agentic AI with real-time oversight and continuous control. The premise is simple: enterprises are throwing AI agents into workflows faster than they can monitor, audit, or explain them, and somebody would like to profit from being the adult in the room.

Annoyingly, Collibra might have found a real opening here.

The launch is aimed at the usual enterprise coalition of the willing and the worried: data leaders, AI platform teams, risk officers, governance people, security teams, and the exhausted operator who knows every “autonomous workflow” eventually becomes a ticket with legal copied on it. Collibra says its AI Command Center gives companies a unified place to see what AI systems and agents are doing, trace decisions, monitor drift, track ownership, and intervene before one overeager little software deputy turns a process mistake into a regulatory memory.

The Robots Need a Hall Monitor, Apparently

I realize “control plane for agentic AI” sounds like the industry took three trendy nouns, locked them in a room, and waited for procurement to blink. But the problem underneath it is real. Enterprise AI is maturing past the cute chatbot phase and into the part where agents actually touch systems, make decisions, and trigger actions. That is when everyone suddenly rediscovers documentation, policy, and blame.

Collibra’s most persuasive trick is that it is not selling an agent. It is selling supervision. That puts this launch in the same family as Anthropic’s decision to monetize babysitting for your other AI agents, except with more governance diagrams and fewer token-pricing jokes. The company is framing the challenge as an accountability gap: agents are entering production without clear ownership, traceability, or policy enforcement.

That argument lands because it matches how enterprise categories usually harden. First, the market gets seduced by capability. Then it gets mugged by operations. Then someone shows up offering visibility, controls, and a user interface for the mugging aftermath. Collibra wants to be that someone.

The Surprisingly Sensible Part Is the Boring Part

According to the launch announcement, more than 40 enterprises joined the private preview, which is a respectable signal that this category is not merely a keynote hallucination. The feature set is also specific enough to evaluate without divine intervention. Teams are supposed to be able to monitor deployed systems, inspect how decisions are being made, identify drift, and step in before an issue turns into an incident. That is not glamorous. It is not even especially futuristic. It is, however, exactly the kind of thing large companies discover they desperately need right after their second successful pilot.

This is why I keep thinking about Quickbase’s guarded attempt to turn vibe coding into governed business software. Same core instinct. The market keeps trying to make AI feel magical; the durable products are the ones quietly reintroducing adult supervision. Collibra is basically saying: yes, yes, by all means deploy the agents. We would simply like to know who owns them, what they touched, why they behaved that way, and whether they are drifting into the moral equivalent of freelance procurement.

Context Is Infrastructure Wearing a New Badge

The other smart piece of this launch is that Collibra is not treating oversight and context as separate problems. The company also highlighted its MCP server, which is already being used by more than 100 customers and is now part of the Databricks ecosystem push around Agent Bricks. In Collibra’s own write-up on the Databricks Marketplace launch, the pitch is that agents should be able to access governed metadata, policies, and business context directly, instead of improvising their understanding of the company from whichever table happened to answer first.

This is one of those deeply unsexy ideas that keeps being right. If you have read our piece on Reltio turning enterprise sludge into trusted context, you already know the plot. The limiting factor for serious AI is often not the eloquence of the model. It is the condition of the context. Enterprises do not fail because their chatbot lacked confidence. They fail because the agent pulled from an outdated system, misunderstood a term, crossed a policy boundary, or treated “customer status” as a vibe.

Collibra’s answer is to push governance closer to the action. That includes a new partnership with Giskard, which pipes testing and validation signals into the control layer, plus assessment templates aligned with the AIUC-1 standard. If that sounds like the industry inventing a new stack just to keep AI from embarrassing itself, that is because it is. But once software starts taking action instead of merely generating prose, you want controls where the action lives.
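For the skeptics who want to see what "controls where the action lives" could mean in practice, here is a minimal Python sketch. To be clear, this is not Collibra's API or anything taken from the launch materials: `PolicyGate`, `AuditRecord`, and the agent and action names are all hypothetical, and a real control plane would do far more (drift monitoring, escalation, actual identity). The point is only the shape of the idea: every agent action passes through a gate that checks ownership and policy, then writes down what happened.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable, Optional


@dataclass
class AuditRecord:
    """One line in the 'who did what, and were they allowed to' ledger."""
    agent: str
    owner: Optional[str]
    action: str
    allowed: bool
    reason: str
    at: str


@dataclass
class PolicyGate:
    """Hypothetical gate: checks ownership and policy before an agent acts."""
    owners: dict[str, str]                # agent name -> accountable team
    allowed_actions: dict[str, set[str]]  # agent name -> permitted actions
    log: list[AuditRecord] = field(default_factory=list)

    def run(self, agent: str, action: str, fn: Callable[[], Any]) -> Any:
        owner = self.owners.get(agent)
        if owner is None:
            allowed, reason = False, "no registered owner"
        elif action not in self.allowed_actions.get(agent, set()):
            allowed, reason = False, "action not permitted by policy"
        else:
            allowed, reason = True, "ok"

        # Record the decision whether or not the action goes ahead.
        self.log.append(
            AuditRecord(
                agent=agent,
                owner=owner,
                action=action,
                allowed=allowed,
                reason=reason,
                at=datetime.now(timezone.utc).isoformat(),
            )
        )
        # Only execute the underlying action if the gate allowed it.
        return fn() if allowed else None


if __name__ == "__main__":
    gate = PolicyGate(
        owners={"invoice-bot": "finance-ops"},
        allowed_actions={"invoice-bot": {"read_invoice"}},
    )
    gate.run("invoice-bot", "read_invoice", lambda: "invoice fetched")
    gate.run("invoice-bot", "approve_payment", lambda: "payment approved")
    for record in gate.log:
        print(record.agent, record.action, record.allowed, record.reason)
```

The design choice worth noticing is that the denial and the audit entry happen in the same place as the action itself, rather than in a report someone reads a quarter later.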

The Main Risk Is That It Could Become a Very Elegant Committee

There is still plenty here to side-eye. The launch language is thick with enterprise self-importance. “Real-time oversight and continuous control” is useful, but it also sounds like the software equivalent of a parent installing sixteen parental-control apps on a Roomba. Private preview is not general ubiquity. And the company is clearly trying to own an entire worldview around governed AI, not just a handy monitoring layer.

That matters because control platforms have a way of attracting committees the way porch lights attract moths. Every valid governance requirement can become two dashboards, three workflows, and a six-week discussion about who gets escalation authority when an agent decides the cheapest path is also the legally funniest one. The danger is not that Collibra is solving a fake problem. The danger is that it could solve it in such an enterprise-coded way that customers end up building a very polished bureaucracy around software that was supposed to make them faster.

Still, this is where the launch feels more mature than a lot of “agentic” theater. Collibra is not asking buyers to believe in a digital colleague with vibes and a logo. It is asking them to admit that once agents become consequential, someone has to monitor behavior, enforce policy, and supply trusted context at runtime. That is also the big lesson behind our recent look at computer-use agents: the moment AI can act, supervision stops being a nice-to-have and becomes the product.

Verdict: A Real Enterprise Hit, With Surveillance-Chic Branding

My verdict is that Collibra’s AI Command Center looks like a real enterprise hit, or at least the blueprint for one. Not because it is dazzling, but because it is pointed at the next obvious bottleneck. Enterprises are not short on AI ambition. They are short on ways to operate that ambition without turning production into folklore.

What Collibra seems to understand is that the winning layer may not be the agent itself. It may be the layer that watches the agents, feeds them governed context, records their choices, and gives human beings enough confidence to let the system keep running after the demo ends. That is not a romantic story. It is an infrastructure story. In enterprise tech, those are usually the ones with better margins.

So yes, I am more impressed than annoyed. The name is dramatic. The worldview is grand. The overall energy is unmistakably “we built a mission control room for your workflows because your AI interns cannot be trusted unsupervised.” But the underlying product logic is solid. Collibra has looked at the coming sprawl of enterprise agents and concluded, correctly I suspect, that somebody is going to make a lot of money selling the clipboard, the radar screen, and the right to say, with a straight face, “no, that bot does not get production access yet.”
